AI – The Bridge Between Reality & Hype

Depending on who you speak to (and there are clear camps of opinion here, each motivated by its own agenda and commercial interests), AI is either the true “second coming” and the basis of everything we will build our future society on, or an overinflated ideology that is less well defined than we realise, fast-tracked by marketing men keen to line their pockets before the bubble bursts.

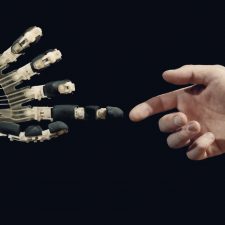

The scaremongers out there will warn us that Skynet is coming to wipe out humanity if we don’t address the problem. But is it really a problem at all? AI is painted as a faceless, logic-driven machine that wants to enslave us, but in reality AI doesn’t desire or want anything. It doesn’t possess human intelligence, and though some argue it learns, it does so based purely on the instructions we as humans give it.

Unlike the machines in Terminator, AI doesn’t simply observe the world and learn from it unprompted. It learns from the instructions we give it, then focuses on those specific areas to pick up habits and behaviour, but only ever with guidance.

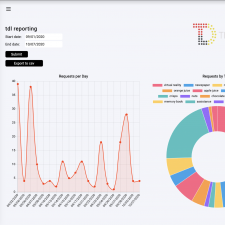

A recent US survey suggested that although around 85% of businesses were talking about AI and machine learning, only 5% were actually doing anything with it.

So are we likely, any day soon, to see a metallic new world where machinery is king and anything flesh and bone is relegated to second-class status or even slavery?

Probably not, although that isn’t to say at least some of us shouldn’t be worried. It won’t be the physical machines – mainly because of the expense involved in the development and the general lack of maturity in robotics – that will take the jobs. It will be the algorithms.

Any job that involves repetition is immediately under threat, and if it hasn’t already been replaced by an algorithm it likely will be within the next decade. Factory workers, sales assistants and bank cashiers are perfect examples of roles that have been revolutionised by technology, and there are many more on the “algorithmic hit list”.

Of greater concern is the unconscious bias being built into current algorithms. Take the lack of women in technology roles as a starting point: fewer than 9% of workers in engineering are women, and women make up just 20% of the workforce across Silicon Valley. Apply the general approach a company like Facebook uses to advertise, and an algorithm based on those current trends would be less likely to push messaging or advertising about tech roles to a female user on its platform.
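To make that concrete, here is a deliberately naive sketch of how such a bias could creep in. It is not how Facebook or any real advertising platform works; the function names and the sample history are invented for illustration, with the 20/80 split borrowed from the Silicon Valley figure above. All the toy "algorithm" does is hand out advert impressions in proportion to who responded in the past.

```python
from collections import Counter

# Hypothetical history of who responded to past tech-job adverts.
# The 20/80 split mirrors the Silicon Valley figure; the records are invented.
history = ["female"] * 20 + ["male"] * 80


def learn_targeting_weights(past_responders):
    """Weight each group by its share of past responders."""
    counts = Counter(past_responders)
    total = sum(counts.values())
    return {group: count / total for group, count in counts.items()}


def allocate_impressions(weights, budget):
    """Split an advert budget in proportion to the learned weights."""
    return {group: round(budget * weight) for group, weight in weights.items()}


weights = learn_targeting_weights(history)
impressions = allocate_impressions(weights, budget=1000)
print(impressions)  # {'female': 200, 'male': 800}
```

Nobody told this code to favour men. It simply copied yesterday's imbalance into tomorrow's advertising, which is exactly how the imbalance sustains itself.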

It’s no secret that many loan or mortgage applications now rely completely on the data held in individual credit files to make an assessment. A human being rarely comes into the equation until it is time to sign off the final paperwork. The system falls down in documented cases where applicants were refused borrowing based more on their postal code than on their own credit history.

Where you live should no more indicate what you can or cannot afford than it should determine whether you are seen as a higher risk of committing a crime, or as more susceptible to illness. This kind of social profiling, left purely in the hands of an algorithm without the option for human intervention or empathy, gives me a far greater reason for concern.
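A small, entirely hypothetical sketch shows how a postal code can end up outweighing the individual. The postal codes, loan records, threshold and function names below are all made up, and no real lender's scoring model is this crude. The point is only that a rule learned from area-level history never looks at the person applying.

```python
# Hypothetical past loans as (postal code, defaulted?) pairs; all data invented.
past_loans = [
    ("AB1", True), ("AB1", True), ("AB1", False),
    ("CD2", False), ("CD2", False), ("CD2", False), ("CD2", True),
]


def default_rate_by_postcode(loans):
    """Return the share of past loans that defaulted in each postal code."""
    totals, defaults = {}, {}
    for postcode, defaulted in loans:
        totals[postcode] = totals.get(postcode, 0) + 1
        defaults[postcode] = defaults.get(postcode, 0) + int(defaulted)
    return {postcode: defaults[postcode] / totals[postcode] for postcode in totals}


def decide(applicant, rates, threshold=0.5):
    """Refuse when the area's past default rate reaches the threshold.

    The applicant's own credit_score is never consulted.
    """
    return "refuse" if rates[applicant["postcode"]] >= threshold else "approve"


rates = default_rate_by_postcode(past_loans)

# Two applicants with identical personal credit histories.
alice = {"postcode": "AB1", "credit_score": 780}
bob = {"postcode": "CD2", "credit_score": 780}

print(decide(alice, rates), decide(bob, rates))  # refuse approve
```

Same credit score, different answer. The only thing separating the two applicants is their address.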

I don’t mind living in a world where the technology around me keeps me safe and healthy. There are so many positives that can change my life for the better. The world I’m less interested in is the one that decides my future based on factors that may – in part at least – be out of my control. Or worse still, keeps elements of information from me because I do not fit a certain profile.

That’s far scarier than anything Skynet might threaten to deliver.

DC

Tags: A.I., Future Technologies, Robotics
